Strolling along a main avenue in a European city, I recently came across a big scene of contemporary retail – the spectacle of a crowd of people outside a shop window, showing such a degree of excitement, cameras held aloft, as is usually only seen before the Mona Lisa in the Louvre.

The reason was another form of modern 'art': a half-naked model sitting on a chair, surrounded by piles of clothes (the shop was a fashion franchise).

Whether most of the people were gathered at the window because of the nakedness of the model, or the novelty value in this street of identical shop windows, or due to the brand’s message (to make us aware of the excessive amount of clothes we own), it was obvious that in any case one thing had been effective: an eye-catching image.

Table of contents

- How Cinderella found her shoe: What is visual search

- Don’t know what to wear? The infinite possibilities of visual search for users and retailers

- Getting ready for the ball: How to prepare images for visual search

- The best matches for visual searches: Machine learning and artificial intelligence

How Cinderella found her shoe: What is visual search

From the outset, online sales have had to cling to visual appearance as the most persuasive argument available to convince buyers, being unable (as yet) to make use of our other senses.

However, this basic notion is not entirely true: selling and buying have always been based on that spark of falling in love with something you see. No-one feels persuaded to buy a briefcase just because of a friend’s description of it, or decides to buy a dress because of the softness of its fabric (what about the cut, the length and the size?), or to eat a mouthful of food just by smelling its aroma (but what if it’s an insect?).

To a large extent, all of those ideas are somewhat flawed. There are many present-day experiences that challenge our dependence on the sense of sight and reveal how unfairly constructed the world is for blind or visually impaired people. However, both for people with a well-developed sense of sight and for retailers who are not yet able to benefit from immersive technologies, seeing something continues to be the key factor.

“50-80% of e-commerce retailers' new customers come from visual search. As humans, we buy by experience, and visual is one of the closest experiences we can have to trigger the imagination. These are already mature and proven buying channels for customers. It's absolutely important to leverage it to grow your business.” | David Jaeger, Result Kitchen Founder

The basic reason is very simple: for the human brain, the step that comes before desiring something is looking at it, and it can take as little as 13 milliseconds to recognise an image. Since most purchases that follow from online searching are based on a desire or a whim derived from a more useful purpose (I was looking for a pestle and mortar, but now I've convinced myself I want a model that’s in green marble), both still and moving images are the most valued raw material in online sales.

However, the sector needs to go a step beyond such traditional methods as leafing through a catalogue, watching an ad on TV, or taking note of something advertised on an underground poster. Until recently, online searching and buying was not very different from those methods, although it did entail the extra comfort of being able to pay and order instantly. Even if results were visual, searches remained textual and passive: the user had no control over precisely which images they were shown.

Visual search has come along to solve that need and to give online shoppers more control, personalisation and interactivity. Finding the perfect product will no longer be like playing “Where’s Wally”.

The 'Shop the Look' effect

A few years ago, the novel option of combining aspects of fictional media with online shopping was hailed as revolutionary: what if, while watching the latest James Bond movie, you could buy the watch worn by Daniel Craig just by clicking on the screen?

Although this idea has given rise to a large number of applications which recreate the clothing outfits of characters from a series and allow the buyer to locate such garments, in reality their freedom of choice remains minimal.

On the one hand, this strategy means that a content creator has to depend on whichever brands have offered to supply their products for the film or series (classic product placement); on the other hand, although the product shown might have convinced the user to buy, he or she will then be shown no alternative to that specific model of that particular brand.

The real revolution in visual search is that the user gains power over the results, in a more refined way than through written or dictated words. The value of enriched data for searches and online retail both now and in the future is indisputable: well-structured content facilitates positioning in search engines and improves the experience to make it more personalised and precise. And this product content will eventually be reflected in key images of visual searches.

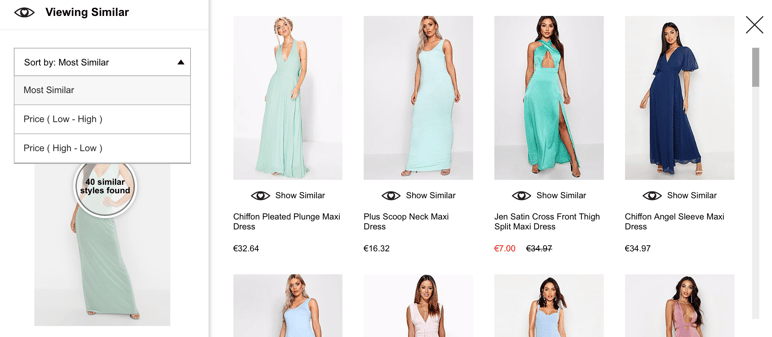

In simple terms, visual search is the ‘Shazam’ of photographs: it empowers the user to carry out an online search based on a reference image. And the positive thing about this method is that it no longer restricts the customer to buying only the exact watch seen on screen. Users can find products similar to the one in the image by applying different filters and ranges, and retailers can see an increase in new visitors and buyers thanks to suggestions for products related to other brands.

All you need is an image, whether snapped with your mobile phone camera, downloaded from the Internet, or saved on social networks. By entering it into a program or search engine, the user finds out what products are available that match that image exactly, or that are similar, cheaper or more expensive.

This system takes advantage of the buying impulse, because the sooner you find a product that’s available, the more likely it is that you’ll decide to buy it without pausing to think about similar, perhaps even more convincing, options. By using visual search, the shopping journey is shortened and becomes more efficient, eliminating the restrictions and frictions of the classical method.

It puts an end to noting down ideas for later and to browsing, checking and comparing manually until the user gives up in frustration, whether because there are no matching results, because they don’t know which shops or marketplaces to compare, or because they don’t know how to describe in writing the product they want. According to some studies, visual searches result in twice as many purchases as text searches, while 62% of new-generation users prefer visual search; it's time we adapted to them.

Who saw it first? The technological career of visual search

The year that’s usually marked as the official starting point for effective visual search is 2017. That year, software companies specialising in visual search tools and extensions appeared on the market, as well as plugins on well-known platforms, starting with that kingdom of imagery: social media.

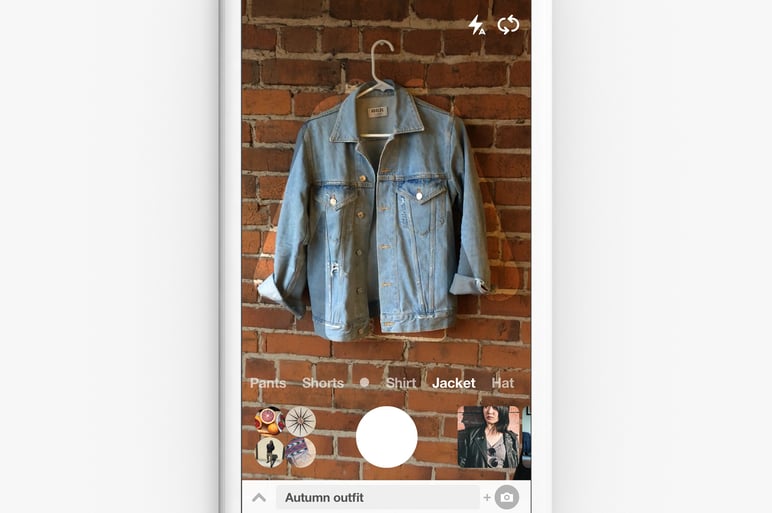

- Pinterest: In 2017 Pinterest launched Lens, a tool based on visual discovery, within its app. The need was already present: a Pinterest user opens the platform in search of inspiration, and the longer they spend browsing there, the better. However, sometimes you have to give users what they want, that is, a very specific result. Lens allows you to photograph any object and immediately see Pinterest images matching or complementing it, like outfits inspired by the handbag you’ve just snapped. Pinterest says that, since its launch, Lens has grown 140% a year and handles more than 600 million visual searches per month. “The future of search will be about pictures rather than keywords,” says its CEO, Ben Silbermann.

- Amazon: The marketplace giant was not going to be left behind and shortly after, it launched the Spark option for Prime accounts, based on interactive images of marketplace products, just as Instagram would later make very popular.

- Google: Google Images may be the second most popular search tool (it has 22.6% of the market, behind only Google itself), but it’s not the primary option for online shopping. In order to improve it, Google launched badges on images that linked to other content, anything from videos or recipes to product-buying pages. Later on, Google Lens enabled searching for items similar to an image from among products for sale on Google Shopping.

- eBay: Using machine learning systems, eBay launched a visual search engine similar to those above, which also guides the user to products for sale on eBay based on any image or part of an image.

- Instagram: The definitive 'Shop the Look' was popularised on what is today the largest network for inspiration and shopping. For both ads and images published within a user profile, Instagram images can be accompanied by badges that show the price of each product and link directly to a product page, known as shoppable images.

The limitation of visual shopping using badges is, as we mentioned earlier, that they do not facilitate comparison. However, many apps and online tools that put artificial intelligence at the service of visual search can compare several options at once, much as flight comparison tools do.

Most of them work best for interior decoration, clothing and food: that popular triad on social media. But as the technology is refined, its applications will spread. What if someone could find, on Tinder, a person resembling the model they saw and photographed in a shop window? Ethical questions also need addressing.

Don’t know what to wear? The infinite possibilities of visual search for users and retailers

Retailers love to have the backing of figures when a new technology emerges, and in the case of visual search they are unparalleled: Gartner predicts a 30% increase in sales for those who adopt this technology before 2021, and there are already success stories with sales increases of between 20% and 50%, such as Forever 21 and Nike, according to Oliver Tan, CEO of ViSenze.

Undoubtedly, for the fashion industry visual search is an essential accessory, along with shoppable images, new 3D and augmented-reality technologies that create virtual fitting rooms to try for size, and digital assistants such as chatbots.

What stages are available in the visual search process?

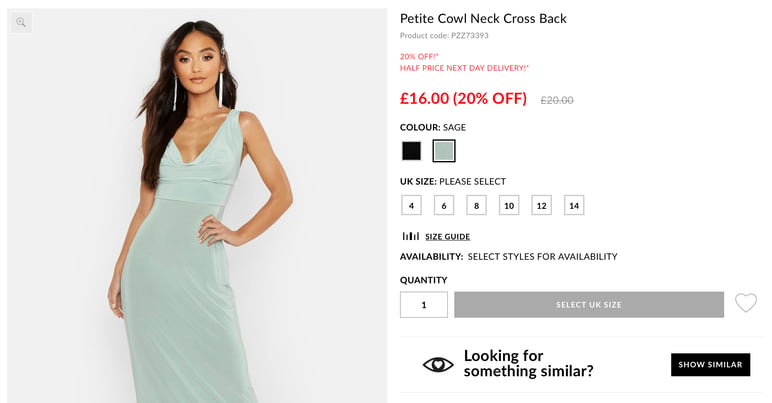

- Finding a garment from a photograph, such as a wedding dress. Some applications can search both websites in HTML and online sales channels in order to accurately inform the user as to how many stores that particular item is available in and at what prices.

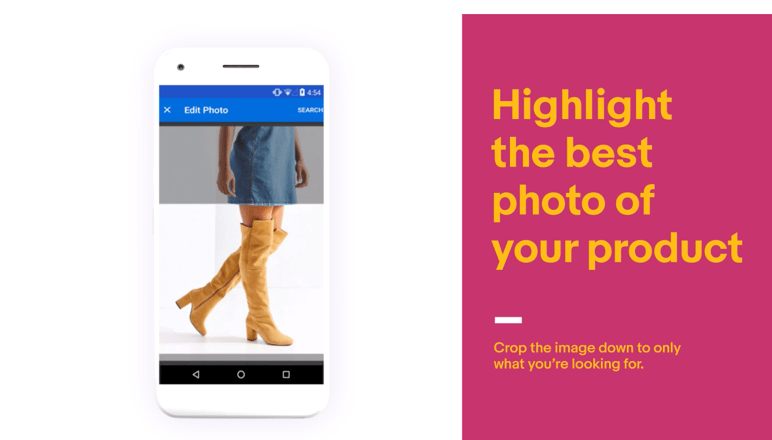

- Finding a particular detail of an image. With a cropping or zoom tool, the user can indicate that they are only interested in images similar to a certain carpet in the photo of a living-room.

- Searching for accessories. As an indirect search, this aspect opens up the true range of possibilities and advantages of visual search for retail. The user can find suggestions for what clothes to combine with a jacket or which cushions would look best on a sofa.

- Searching for products using spatial reference. Very popular in furniture applications such as IKEA or Amazon Showroom, this combination of image recognition and augmented reality offers suggestions for pieces that can fit within specific dimensions, such as a terrace or a set of shelves.

Finding the key product from a photograph is a big step forward for the user, but for retailers even more attractive is the possibility of related content, whether in search engines or in the internal visual search options of the website or app.

“An advantage of visual search is that it relies entirely on item appearance. There is no need for other data such as bar codes, QR codes, product name, or other product metadata.” | Brent Rabowsky, Amazon Web Services Machine Learning Specialist

Uses and benefits of visual search for sellers

- Improving the shopping experience and, therefore, user satisfaction and loyalty.

- A more efficient shopping journey: The 'Like' moment can be instantly transformed to 'Pay' and the steps to completing a sale are reduced, as recently demonstrated by Instagram including its 'Checkout' option directly from the image itself.

- Reducing customer loss: As they find what they want more quickly, the likelihood of customers abandoning the search is reduced.

- An increase in sales: Many results indicate that more accurate search results and complementary suggestions increase the average spending of each user.

- Investigating copies and copyright violations: Using reverse visual search, you can locate places where brand and catalogue images are being used without permission.

- Ads that are more specific and are linked to the appropriate channels: For example, if someone has searched for a coat worn by a certain singer in a photo, ads can be placed on Spotify to show when they open that musician's page.

- Better information and more enriched profiles about customers: Not only will you know what they like about your product category, but also what other things inspire them and might be related to your catalogues, so as to find new positionings and refine each future visit to your website or app.

Uses and benefits of visual search for buyers

- Locating image sources: sometimes knowing what brand a dress is can help the user discover a new favourite store.

- Locating better images: from a low quality photograph, others can be found at higher resolution, providing more detail.

- Direct link between physical and online shopping: the gap between seeing something and being able to buy it is reduced, for instance when a store is closed, when a size is unavailable, or when there is no branch nearby. The same applies when you are leafing through a magazine: Elle magazine, for example, offers an option that combines image recognition and shopping tags through your camera without the need for an app.

- Discovering new items: visual search offers thousands of related results that the user would never have found on their own, nor as speedily.

- Eliminating obstacles: the user doesn’t need to pause while translating her wish into the sort of language a computer can understand, choosing the most appropriate words for a search engine. What if she is not able to describe the texture of the particular fabric she wants or a particular shade of blue? The image shows everything just as it is, without effort.

- Saving time: when searching for a reference item, the user saves having to skim through dozens of pages and galleries until they find what they are looking for.

- New ideas: the refinement of artificial intelligence brings the added advantage of being able to compare different options, budgets and accessories.

- Lack of stock no longer a problem: if that specific product is out of stock, visual search can check for other channels where that item is sold, or suggest similar products of the same or a different brand.

- Direct connection with customer service: many chatbots incorporate visual search tools to help users find a product or accessories without having to browse the entire catalogue.

Of course, this technology has its limitations too. The results are not yet 100% reliable, because some refinements are still missing, such as filters that keep cheap copies out of the suggestions. Nor can the whole Internet, or an excessively broad catalogue, be searched. There are also certain ethical implications.

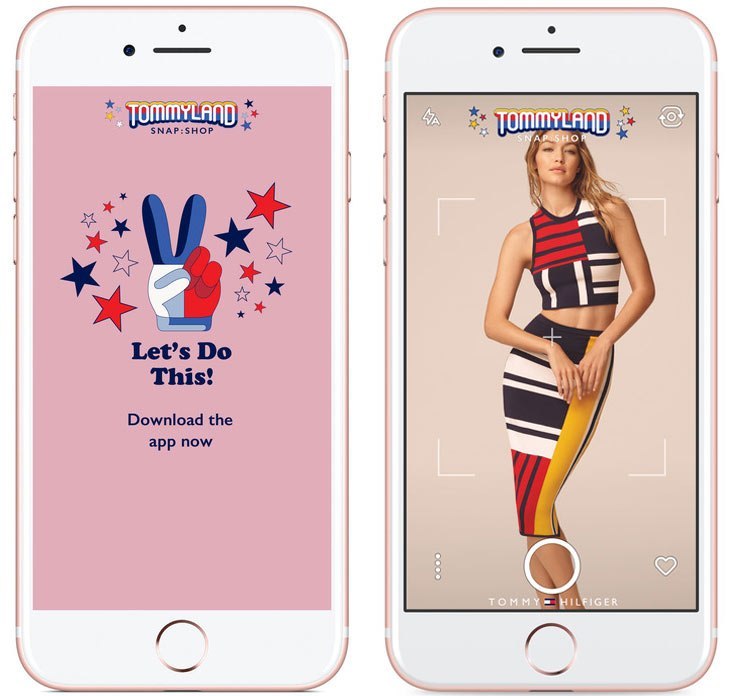

It would be questionable if someone could upload a photograph of a stranger, snapped on a street or in a café, and have it stored in some online database or record. This may be acceptable where models working in the public sphere are concerned, as with the app that Tommy Hilfiger offered in 2017 to identify garments from catwalk shows, but its use with anonymous people or even children could lead to a lot of problems.

Getting ready for the ball: How to prepare images for visual search

Visual searches may sound great, but how can you ensure that products from a catalogue or store will be included in the results? For the moment, the recommendations are not very different from those that should already be applied in order to improve the positioning of images in text searches.

- Image name: use descriptive keywords in the file name, especially for the most prominent visual elements in the image, as well as the brand.

- Alt text: include a thorough description in the image's alt attribute, as specific as possible to help identification and improve organic visibility, including the serial number or SKU if the product has one.

- Adequate resolution: this will depend on each channel and use, but normally for visual searches you need images of sufficient quality to be enlarged without pixelation. Large files can cause problems for internal visual searches on a website or app, since they slow down the loading of results, so a balance must be found.

- Structured data: monitor product codes and their location to help search engines correctly identify the content of each page or record. Using the schema.org Product type and including at least the name, image, price, currency and availability is recommended.

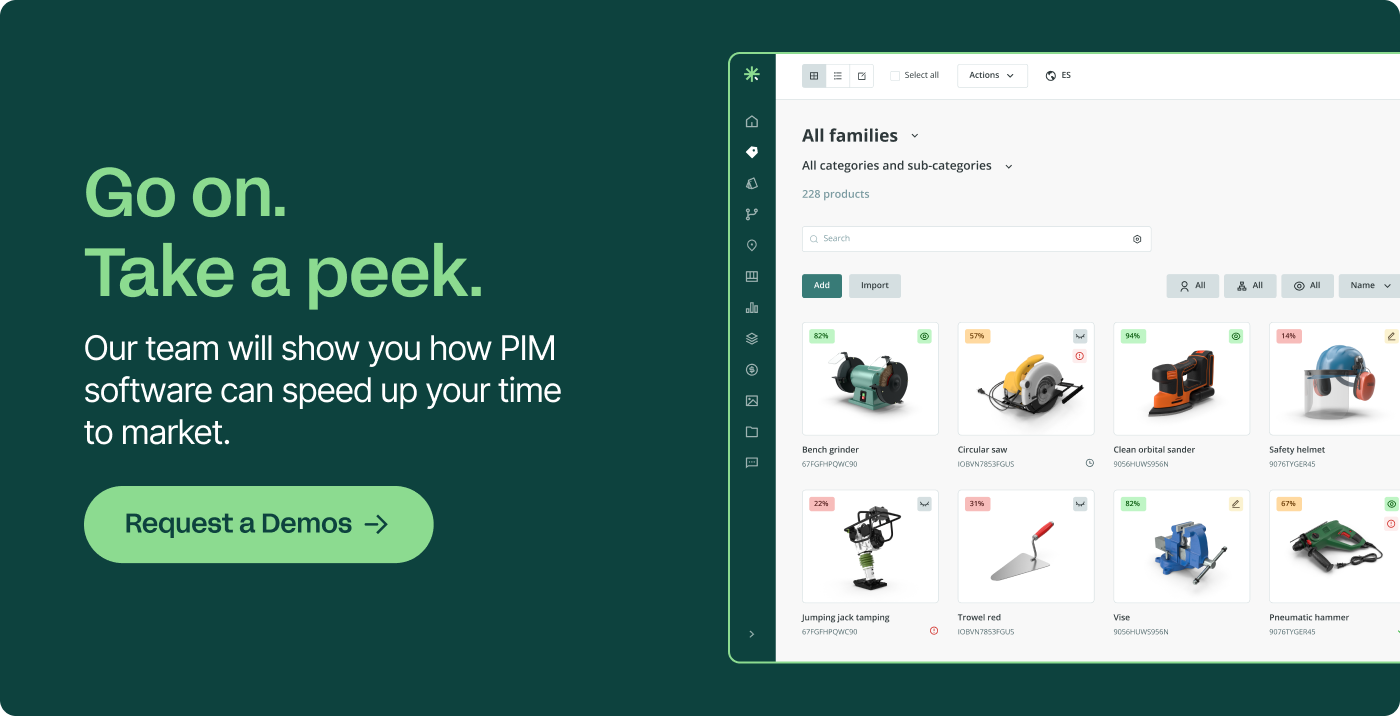

- Optimised product content: The more up-to-date and complete each product’s content is kept, including data, metadata, titles, descriptions, lists, images and other attributes, the more relevant the product will be in both its category and the search results. Product Information Management technology is the best ally for visual searches. Having the product content ready is the best strategy for positioning whether in traditional searches or in any new, future option.
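As a rough illustration of the checklist above, the following Python sketch derives a descriptive file name, an alt text and a schema.org Product snippet from a single product record. The helper names (`slugify`, `image_filename`, `alt_text`, `product_json_ld`), the sample product and the example.com URL are all hypothetical; only the schema.org property names (`name`, `image`, `sku`, `offers`, `price`, `priceCurrency`, `availability`) come from the public vocabulary. Treat it as a starting point under those assumptions, not as a complete SEO recipe.

```python
import json
import re
import unicodedata


def slugify(text: str) -> str:
    """Lowercase the text, drop accents, and join the words with hyphens."""
    ascii_text = unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def image_filename(brand: str, name: str, colour: str, extension: str = "jpg") -> str:
    """Build a descriptive file name from the brand and the main visual traits."""
    stem = slugify(" ".join([brand, name, colour]))
    return stem + "." + extension


def alt_text(brand: str, name: str, colour: str, sku: str) -> str:
    """Build a specific alt description that also carries the SKU."""
    return f"{brand} {name} in {colour} (SKU {sku})"


def product_json_ld(name: str, image_url: str, sku: str,
                    price: float, currency: str, in_stock: bool) -> str:
    """Serialise the minimum recommended fields as schema.org Product JSON-LD."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "image": image_url,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product used only for illustration.
filename = image_filename("Acme", "Marble Mortar & Pestle", "Green")
print(filename)
print(alt_text("Acme", "Marble Mortar & Pestle", "green", "MP-001"))
print(product_json_ld("Marble Mortar & Pestle", "https://example.com/img/" + filename,
                      "MP-001", 39.9, "EUR", True))
```

The JSON output would normally be placed in a `<script type="application/ld+json">` element on the product page. Generating all three pieces from the same record keeps the file name, the alt text and the structured data consistent with each other.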

“Now, retailers can use computer vision technology to interpret the style of their inventory, as well as its size and color. That creates entirely new ways to curate an e-commerce store and, in turn, a significantly better experience for the customer. For their part, the customer can find products in those moments when they would previously have thought, 'I know what I want, but I don't know how to describe it.'” | Clark Boyd, Digital Marketing Consultant

The best matches for visual searches: Machine learning and artificial intelligence

As we have already indicated, many platforms and brands develop their own internal visual search system in their web pages and apps, although the product content must always be prepared so as to appear in the results of other search engines.

Some systems are very simple: in the Lush app, for example, photographing a product leads straight to its product page on the website. But by using the metadata of an image, any system based on artificial intelligence can detect which visual results are really relevant, based on similarities of form, colour, pattern and other elements that are, so to speak, eye-catching.

For example, the well-known Macy's, Marks & Spencer or ASOS chains allow you to search for products from their catalogue based on any photo that you upload to the app. However, developing this type of proprietary or in-house technology can be quite expensive, especially if the catalogue is very extensive. For this reason, many brands, such as the retailer Target, use Pinterest Lens as a substitute for their own visual search engine.
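To make the idea of similarity concrete, here is a deliberately simplified Python sketch that ranks catalogue images by colour alone. It assumes each image is already available as a list of (R, G, B) pixels; the function names and the tiny sample catalogue are hypothetical. Production systems typically rely on features learned by neural networks that also capture form and pattern, so this only illustrates the ranking principle, not any vendor's method.

```python
import math


def colour_histogram(pixels, bins_per_channel=4):
    """Count pixels per coarse RGB bucket and normalise the counts to sum to 1."""
    step = 256 / bins_per_channel
    hist = [0.0] * bins_per_channel ** 3
    for r, g, b in pixels:
        # Map each channel value (0-255) to a bucket index, clamped to the last bucket.
        ri = min(int(r / step), bins_per_channel - 1)
        gi = min(int(g / step), bins_per_channel - 1)
        bi = min(int(b / step), bins_per_channel - 1)
        hist[ri * bins_per_channel ** 2 + gi * bins_per_channel + bi] += 1
    total = sum(hist) or 1.0
    return [count / total for count in hist]


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_catalogue(query_pixels, catalogue):
    """Sort catalogue items from most to least similar to the query image."""
    query_hist = colour_histogram(query_pixels)
    scored = [
        (name, cosine_similarity(query_hist, colour_histogram(pixels)))
        for name, pixels in catalogue.items()
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical data: a photo of a red toaster and two catalogue images.
red_toaster = [(200, 30, 30)] * 10
catalogue = {
    "blue armchair": [(20, 40, 200)] * 10,
    "red washing machine": [(210, 40, 35)] * 8 + [(250, 250, 250)] * 2,
}

for name, score in rank_catalogue(red_toaster, catalogue):
    print(f"{name}: {score:.2f}")
```

In this toy data the red washing machine ranks above the blue armchair, because most of its pixels fall into the same colour bucket as the toaster. Swapping the histogram for a richer feature vector would leave the ranking step unchanged.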

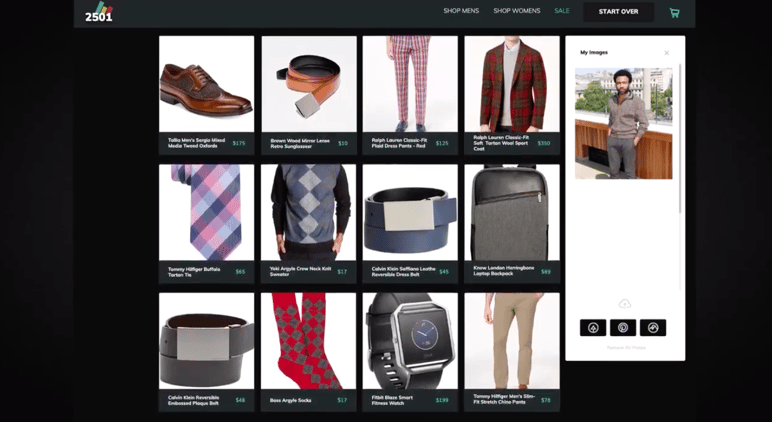

Developments by specialised companies, such as IBM Watson or Clarifai, are the most interesting, as they go a step beyond searching for copies of an image. For example, Synthetic's Style Intelligence Agent (SIA), developed with Adobe Sensei, is a discovery service that uses artificial intelligence to help users complete their outfits and discover many possibilities that might complement a single image.

This means that the system can not only look for results similar to a photo, but even deduce (or recognise) patterns and search for products across different categories, such as clothing inspired by the style of certain paintings, interior design based on images of natural landscapes, or products that match other items the user already owns. Do you want a dress that resembles Van Gogh's Starry Night, an armchair the colour of the Serengeti, or a washing machine that matches your red toaster? Just upload a photo, choose the desired filters and the robot will do the analysis for you.

While these services may be incorporated into brand catalogues, visual search apps such as CamFind are already appearing that search multiple brands and results online: a really interesting option for buyers who are tired of comparing manually on Google and Amazon.

Other, more futuristic options, which according to technology experts we shall be seeing from 2020 onwards, are scanners to compare prices between different stores (as Walmart is currently testing) and mirrors in physical shops capable of measuring a customer's dimensions to suggest the best-fitting clothes in stock.

In both the online and offline worlds, the trend seems clear: to save the customer the tedium of searching digital and physical shelves, and to personalise the service of recommendations as much as possible.

For retailers, this push forward of artificial intelligence into visual search tools signals the need to be quick in its implementation, because unless the AI system can scan 99% of an inventory, the results will not be as accurate nor will the option be as attractive to the buyer. To link so many articles to a new system, companies must act in time and with sufficient organisation.

“One of the most important trends in marketing is personalization. The ability to arm your customers in a retail setting with visual search is an opportunity to take personalization to the next level. So embrace it. Invest in it. And unlock the power of both personalization and visual search before the competition does.” | Ross Simmonds, Digital Marketing Strategist

Final thoughts

The applications of visual search and image recognition technologies are endless, from recognising plants to identifying people in security systems, but without doubt it is in the retail sector that they have become a favourite talking point.

The advantages of implementing visual search in a website or app, apart from appearing in visual search results, are outstanding: greater customer loyalty, increased sales, and higher profits in the short and medium term. The key is to adapt the type of visual search to the customers’ most common goals and to the manner in which they tend to search, use and buy products. And in this new positioning, an image can be worth more than a thousand keywords.